Op-ed by Dave Sierra and Scott Shadley, Solidigm.

The AI boom is a thirsty business. By 2028, water consumption by AI data centres is projected to grow 11x, potentially reaching more than a trillion litres annually. As global leaders look for ways to shrink the environmental footprint of technology infrastructure, the industry has championed water-saving liquid cooling for high-power GPUs.

While the terms ‘liquid cooling’ and ‘water-saving’ might at first seem contradictory, the difference here is that the liquid is typically isolated and reused in a closed-loop IT equipment cooling system. What’s often overlooked? Today’s AI systems may be leaving a massive chunk of hardware stuck in the past, at a potential cost of billions of litres of water per year.

The hybrid cooling trap

Right now, many state-of-the-art AI servers are being deployed in what is known as a ‘hybrid’ cooling configuration. The ultra-hot GPUs get the VIP treatment with highly efficient direct-to-chip (DTC) liquid cooling. But the Direct-Attached Storage (DAS) – the SSDs sitting in the server right next to those GPUs, feeding them oceans of training data – is still cooled by traditional spinning, mechanical fans.

It’s like buying a sleek, state-of-the-art electric performance car, but strapping a loud, gas-guzzling generator to the roof to run the air conditioner.

Why is this bad for the environment? Because cooling storage with air requires fans in the server. These spinning fans are a ‘parasitic’ power drain on the system (energy that doesn’t power the drives themselves), adding unproductive overhead to storage power use. But the bigger consequence is water waste.

The heat generated by the drives must ultimately be removed from the data centre. In many facilities, that heat is transferred from the server exhaust air into a piped water system, which carries the warm water outside to massive evaporative cooling towers. Critically, these towers reject heat by evaporating clean water into the atmosphere.
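As a rough illustration of why evaporative towers consume so much water, the sketch below estimates how much water must evaporate to reject a given amount of heat. It assumes, as a simplification, that all of the heat leaves as latent heat of vaporization (about 2.43 MJ/kg near 30°C) and ignores drift and blowdown losses, so real towers use more. The constant and function names are illustrative.

```python
# Idealized estimate of water evaporated by a cooling tower.
# Assumption: all heat is rejected as latent heat of vaporization;
# drift and blowdown losses (which add to real consumption) are ignored.

LATENT_HEAT_J_PER_KG = 2.43e6   # latent heat of vaporization of water near 30 °C
J_PER_KWH = 3.6e6               # joules in one kilowatt-hour
WATER_DENSITY_KG_PER_L = 1.0    # approximate density of liquid water

def litres_evaporated(heat_kwh: float) -> float:
    """Litres of water evaporated to reject `heat_kwh` of heat (idealized lower bound)."""
    heat_j = heat_kwh * J_PER_KWH
    mass_kg = heat_j / LATENT_HEAT_J_PER_KG
    return mass_kg / WATER_DENSITY_KG_PER_L

print(f"{litres_evaporated(1.0):.2f} L evaporated per kWh of heat rejected")
```

Under these assumptions, every kilowatt-hour of drive heat sent to a tower costs roughly 1.5 litres of evaporated water.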

In a world where data centres are increasingly sharing and competing with local communities or agriculture for stressed water supplies, relying on evaporative cooling for IT equipment is an environmental liability.

Cutting the fans – the 100% liquid solution

The overlooked environmental opportunity lies in moving your DAS from this less-than-optimal hybrid design to a 100% fanless, liquid-cooled architecture. Solidigm has worked with NVIDIA to address SSD liquid-cooling challenges such as hot-swappability and single-side cooling.

When you replace bulky, air-cooled storage with the Solidigm D7-PS1010 9.5mm cold-plate-cooled eSSD, the fans disappear. Heat from the SSDs transfers directly into a closed-loop liquid cooling system, potentially driving the water usage for storage cooling close to zero. And per unit volume, water can absorb thousands of times more heat than air.
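The "thousands of times" advantage of liquid over air comes mainly from heat capacity: a cubic metre of water absorbs far more heat per degree of temperature rise than a cubic metre of air, while water's thermal conductivity is only a few tens of times higher. The minimal sketch below compares both, using approximate room-temperature property values (textbook assumptions, not Solidigm data).

```python
# Approximate fluid properties near 25 °C and sea-level pressure (textbook values).
# Units: density kg/m^3, cp J/(kg·K), k (thermal conductivity) W/(m·K).
WATER = {"density": 997.0, "cp": 4181.0, "k": 0.607}
AIR = {"density": 1.184, "cp": 1006.0, "k": 0.0262}

def volumetric_heat_capacity(fluid: dict) -> float:
    """Heat absorbed per cubic metre per kelvin of temperature rise, in J/(m^3·K)."""
    return fluid["density"] * fluid["cp"]

capacity_ratio = volumetric_heat_capacity(WATER) / volumetric_heat_capacity(AIR)
conductivity_ratio = WATER["k"] / AIR["k"]

print(f"heat carried per unit volume: water/air ~ {capacity_ratio:.0f}x")
print(f"thermal conductivity:         water/air ~ {conductivity_ratio:.0f}x")
```

With these values the heat-capacity ratio comes out near 3,500x and the conductivity ratio near 23x, which is why a liquid loop needs far less volumetric flow than fans to move the same heat.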

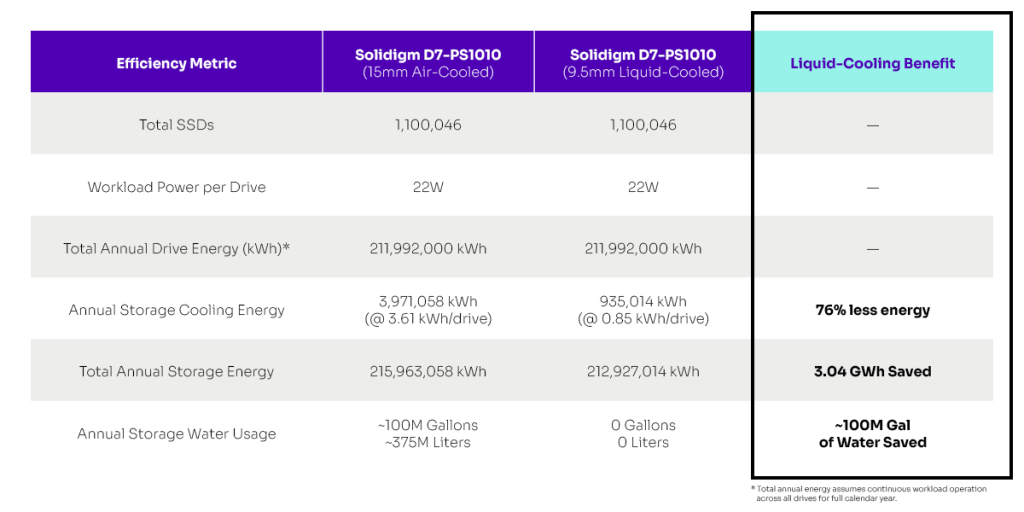

Let’s look at the actual impact of this switch at the scale of a 1-gigawatt (GW) data centre. If you compare a high-performance air-cooled eSSD to the exact same eSSD liquid-cooled in a modern GPU system, you aren’t just saving a little electricity. You are cutting the cooling energy required for each drive by roughly 76%, from 3.61 kWh per drive per year down to just 0.85 kWh.* Multiplied across the drive count of a 1GW facility, that can add up to gigawatt-hours of reclaimed cooling energy.
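The per-drive figures are easy to check, and scaling them to a facility only needs a drive count, which the article does not give. The sketch below uses the two quoted values (3.61 and 0.85 kWh per drive per year) and a hypothetical drive count chosen purely for illustration.

```python
# Per-drive cooling energy figures quoted in the article (Solidigm data).
AIR_COOLED_KWH_PER_DRIVE_YR = 3.61
LIQUID_COOLED_KWH_PER_DRIVE_YR = 0.85
KWH_PER_GWH = 1_000_000

def cooling_savings(drive_count: int) -> tuple[float, float]:
    """Return (percent reduction per drive, facility savings in GWh/yr)."""
    saved_per_drive = AIR_COOLED_KWH_PER_DRIVE_YR - LIQUID_COOLED_KWH_PER_DRIVE_YR
    pct = 100 * saved_per_drive / AIR_COOLED_KWH_PER_DRIVE_YR
    gwh = saved_per_drive * drive_count / KWH_PER_GWH
    return pct, gwh

# Hypothetical fleet size: the article does not state how many drives a 1GW site holds.
pct, gwh = cooling_savings(drive_count=1_000_000)
print(f"{pct:.1f}% reduction per drive, {gwh:.2f} GWh/yr saved across the fleet")
```

At these per-drive figures, savings reach 1 GWh per year at roughly 362,000 drives (1,000,000 kWh / 2.76 kWh), so "gigawatt-hours" implies a drive count in the hundreds of thousands or more.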

The above table compares the air-cooled vs. liquid-cooled storage efficiency metrics for two Solidigm SSD configurations deployed in a 1-gigawatt AI data centre. Key liquid-cooling benefits are highlighted.

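But the water impact is where the environmental dividends really kick in. Because the liquid loop can run warm (Solidigm’s measurements assume a 45°C liquid inlet), its heat can be rejected through dry coolers rather than by evaporation. By moving the storage heat load onto this closed liquid loop, a facility can bypass those thirsty evaporative cooling towers entirely, bringing water consumption for DAS cooling down to zero.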

By eliminating hybrid cooling inefficiencies at 1GW scale, you can save roughly 100 million US gallons (about 380 million litres) of water annually compared to running the exact same high-performance storage on air.

To put that number in perspective: that is enough clean water to fill roughly 150 Olympic-size swimming pools every single year.
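The volume conversions can be sketched the same way. The US gallon is defined as exactly 3.785411784 litres; the pool volume is an assumption (a nominal 50 m x 25 m x 2 m Olympic pool, about 2.5 million litres), and the result shifts with whatever pool depth is assumed.

```python
US_GALLON_L = 3.785411784    # litres per US gallon (exact by definition)
OLYMPIC_POOL_L = 2_500_000   # assumed nominal pool: 50 m x 25 m x 2 m deep

def gallons_to_litres(us_gallons: float) -> float:
    """Convert US gallons to litres."""
    return us_gallons * US_GALLON_L

def pools_equivalent(us_gallons: float) -> float:
    """Express a volume in US gallons as a number of Olympic-size pools."""
    return gallons_to_litres(us_gallons) / OLYMPIC_POOL_L

annual_savings_gal = 100e6  # the article's rounded annual savings figure
print(f"{gallons_to_litres(annual_savings_gal) / 1e6:.0f} million litres")
print(f"{pools_equivalent(annual_savings_gal):.0f} Olympic-size pools")
```

With the rounded 100 million gallons this works out to about 379 million litres, or about 151 pools; a shallower assumed pool or an unrounded savings figure would push the count higher.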

The bottom line

We can no longer afford to treat storage as an afterthought in data centre thermal design. Brute-forcing air across dense, high-performance SSDs is a waste of energy and a drain on one of the planet’s most precious natural resources. And water savings don’t stop at the cooling plate.

By consolidating Network-Attached Storage (NAS) datasets onto fewer, higher-capacity SSDs, data centres can meaningfully shrink their total power footprint – and with it, their demand on evaporative cooling towers. Less energy in, less water out. This space is evolving, so watch closely.

The takeaway this Earth Day, and every day, is simple: if you’re not considering 100% liquid cooling for your storage, you’re missing out on the full environmental benefits of liquid-cooled eSSDs.

About the authors

Dave Sierra and Scott Shadley lead AI Infrastructure Efficiency at Solidigm. In addition to the strategic planning and marketing of efficient solutions, their team is dedicated to working with key technology leaders to better enable the shift to more efficient AI systems. Solidigm leverages decades of product leadership and technical innovation, collaborating with customers to transform their business and propel them into the data-centric future. Learn more at www.solidigm.com.

* Source: Solidigm. Results are calculated from airflow and fluid pressure-drop measurements under typical server fan/pump conditions, with 35°C air and 45°C liquid inlet temperatures, while running the worst-case thermal workload on 8x E1.S eSSDs.